Abstract

Bayesian Networks are a tool of the new application to the question of risks, in particular for modeling operational risk. Its use for measuring operational risk in the financial sector has channeled large efforts in developing new methods that measure this type of risk which allow improving the internal gestation of the operational processes. Applying Bayesian Networks for modeling operational risk presents the opportunity to incorporate elements of qualitative analysis as well as the opinion of experts in the process of selecting interest variables, defining the structure of the model through its dependencies of causality, such as the specification of a priori distributions and conditional probabilities of each node. It has been found that Bayesian models that incorporate data, as well as expert judgment (especially about causality), work better than any other method applicable in the field.

Introduction

In finance, delinquency, temporary insolvency, restructuring or bankruptcy itself is the difficulties of companies that have serious significance to its economic environment. These issues are much more even in periods in which the economic environment adversely increasing the mortality rate of the companies. That is why the study of the conditions of solvency and financial stability of the company, and its application to the prognosis of financial failure events, have been recurring issues in the financial literature. There are many ways to make this forecast. The statistical methods of multivariate discriminant analysis proposed by Altman (1968) or the conditional LOGIT proposed by Ohlson are still used widely; however, the little-structured nature of the problem has led to the development of analysis methods more flexible that heuristics and interactive learning processes have an important role. It has been suggested the use of the statistical Bayesian and trees of classification, as well as strategies based on the combination of methods Multi-criteria and group decision.

Review

Bayesian is implemented in finance in different ways. It is also used to implement a classifier of bank loans through the Bayesian approach. With the information provided by the customer requesting the credit, applied to the historical database that has the Bank, the model suggests the Manager a first decision on the acceptance or not of the customer’s request (model of credit scoring). This article proposes a prediction system that optimizes the statistical decision that determines the class. The models of credit scoring help at first to make the decision of whether or not to grant credit and even allow justifying it. However, along with results, it should consider other qualitative dimensions that must necessarily complement the decision-making. This involves decisions that cannot be studied with mathematical models.

One should have a good method that will help us to make more correct decisions can improve the efficiency of the management of a Bank, being of special interest in a situation like the current one, in which financial institutions is demanding a further analysis of the risk and an improvement in the efficiency of its management.

The ways to confront the problem of classification are varied. The great diversity of existing techniques can incorporate statistical analysis, mining tools in data, or artificial intelligence with machine learning; the more classical technique problems of credit scoring has been the logistic regression, which generally provides good statistical results. Another classic approach is to synthesize the information from the database of customers through rules and decision trees; Finally, other innovative approaches employed in models of credit scoring are based on the application of neural networks, evolutionary algorithms implementing, splines adaptive regression, the vector support or fuzzy logic machines.

Situations in which human beings make decisions can be classified according to the knowledge and control you have over the variables that intervene or influence the problem in 3 categories: certainty, risk (the problem is known, possible solutions are known, the results that can be shed, are not known with certainty but the probability of each outcome occurring) and uncertainty (have poor information to make the decision (do not have any control over the situation, not known how may vary or the interaction of the variables of the problem, may raise different alternative solutions but not can be assigned probability outcomes which will deliver). Indecision theory also classified as structured and unstructured uncertainty, the transition from situations of uncertainty to risk situations, i.e., the quantification of the likelihood of occurrence of a certain solution is vital in economic decision-making. In many cases, the difference between the success and failure of the company lies in handling the activity of bank customers. Therefore, the availability of a good mechanism that ventures the probability that a customer returns a credit is of paramount interest to a financial institution. This mechanism should be relatively easy that incorporate more complex modules with access to the centers of address or points where the latest or most important decisions are taken.

Bayesian techniques and methods provide these utilities. These can be simple construction, with clear semantics, and have an approach that is solid and elegant; they have traditionally presented the problem of its high computational cost, a problem that technological progress is contributing to solving quickly and effectively.

Bayesian models serve both to solve problems from a descriptive and predictive perspective. From this standpoint, we can say that sometimes they complement or they even outnumber the rules of Association. In terms of predictive function, it is confined to the Bayesian techniques as methods of classification.

Mitchell reasons that the Bayesian methods are some of the techniques that have been most used in artificial intelligence, machine learning, and data mining problems suggest:

- BN is a very valid and practical method for making inferences with the data which means to induce probabilistic models which, once calculated, can be used with other data mining techniques.

- BN is extremely useful in the understanding of other techniques of artificial intelligence and data mining not working with probabilities that give us the Bayesian techniques. This combination of methods is very helpful to optimize the solutions to some problems in data mining.

It has been commented that to obtain a network Bayesian must be specified a graphic structure and a probability function joint that is specified by the product of the probabilities of each node given its parents, which means that in the majority of cases not known neither the structure nor the odds. This is the reason why different learning methods have been developed to obtain the Bayesian network known data.

Learning tasks which face the different methods can be divided into structural learning and parametric learning.

In structural learning, the dependency relationships that exist between the variables in the data set to get the best graph that represents these relationships are established. This problem, as it has already stated above, is quite complex, since the search structure representing us better data is an NP-complete problem, which makes it computationally intractable when the number of variables is large. Often they seek efficient algorithms which, although are not optimal, yet approaching the solution sought with limited computational costs (Neapolitan, 2003).

Basically, the structure learning methods are of two types. Methods that use setting and algorithms of search metrics are, on the one hand. The metric defines the quality of the Bayesian-based on the data network and the search algorithm will try to find the network that maximizes this metric exploring all the possibilities. It should take into account that the number of possible graphic structures greatly increases with the number of variables. Depending on the metric used and search technique; there is a wide range of procedures that can range from simple greedy methods to methods that use genetic algorithms.

Other methods are based on a statistical test to detect possible dependencies/independence present in the data, so the network would follow these discovered dependencies. These methods seem to be more efficient, but they can be very sensitive to test failure, especially when many variables are involved in the problem. Both strategies can also be used to optimize the search and build the graph.

Once known the Bayesian network structure, the estimation of the parameters of the network problem boils down to calculating the probability function posteriori. Parameters consist of odds priori and Conditional probabilities of the other variables, given its parent nodes and root nodes.

The Bayesian approach is a viable alternative for the analysis of risks under conditions of uncertainty in the finance sector. By construction, the Bayesian models incorporate initial information through the distribution of a priori probability. Subjective information can be included in the decision-making process as the opinion of experts, the judgment of analysts or the beliefs of specialists. This work uses a Bayesian network model to examine the interrelationship of risk factors in the process of the program of direct support, Procampo payment. The bayesian model proposed is calibrated with observed data in events that arose during the process of obtaining information.

The possibility of using conditional, discrete, or continuous distribution functions, calibrate the model with information sources, both objective and subjective, and establish a causal relationship between risk factors. These are the features that distinguish precisely this research compared with the classical statistical models. This work is organized in the following way. First, there is the terminology that will be used for the calculation of RO in accordance with processes and related to payment systems. Later, it discusses the framework for the development of the work, emphasizing the features and benefits of the RB. Subsequently, it presents the problems that it intends to solve, as well as the scope of application of the proposed methodology. Two networks were built: one for the frequency and another for the severity; to quantify each node in the network and obtain a priori probabilities and ‘conform’ probability distributions for cases where there is historical information; otherwise it resorts to opinion or judgment of experts to obtain the corresponding odds. Then it proceeds to calculate the odds later through Bayesian inference algorithms, in specific the junction tree. The algorithm is used then and expected operational conditional risk with a posteriori distribution is calculated for frequency and severity. Moreover, the estimated maximum loss expected is calculated with the classic model, and both results are compared. Finally, the main conclusions of this work are presented.

The payment system was gradually developed by support and services during the agricultural commercialization. Federal resources derived from the operation of Pro-campo to integrate the register of beneficiaries of this program with more than 2.7 million producers and with a supporting surface of approximately 14 million cultivable hectares — distributed 4.2 million farms — the most complete database of the sector was created. Therefore, Pro-campo and the payment system that accompanies it have the same temporal origin and the same causal explanation; However, additional and progressively, this system and its vast database were exploited to the issuance and distribution of support of other programs of the Secretariat as a stimulus program to livestock productivity support to the coffee, marketing, traces TIF (Federal inspection type), agricultural energy, among others (BCBS, 2006).

The payments system is the instrument that has the Secretariat of agriculture, livestock, Rural Development, fishing, and food to administer the payment of allowances of different programs. It is structured in three subsystems: governance, substantive processes, and information technology. From the point of view of governance, the payment of allowances relies on the payment system for the fulfillment of the objectives and lines of action laid down in the sectorial agricultural and fisheries development program, inside of which stands out: i). implement new schemes of Pro-campo and Pro-gan (a program of sustainable livestock production and livestock management and beekeeping) with new rules of operation. ii). establish a system that uses banking services.

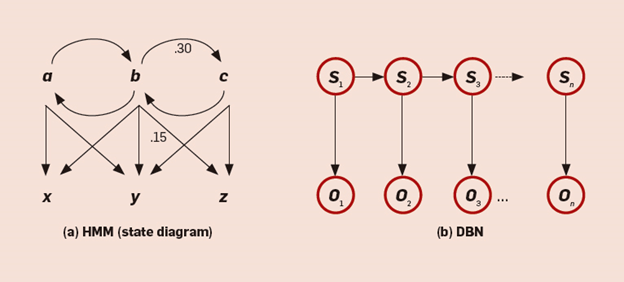

A Bayesian network, Bayes network (RB) is a graph that represents the domain of the variables of a decision, the quantitative and qualitative relations of these, and their measures of probability. In addition, one can include utility functions that represent the preferences of the decision-maker. An important feature of the RB is its graphic form, which allows the representation of complicated probabilistic reasoning in a visual way (Madsen, and Kjaerulff, 2008). Another aspect to highlight is its quantitative part because they allow incorporating subjective elements such as the opinion of experts, as well as 9-based odds. Perhaps the most important feature is that they are a direct representation of the real world and not a process of reasoning. Bayesian Networks are graphic directed acyclic (GDA). A graph is defined as a set of nodes connected by arcs. If there is a relationship of precedence represented by arches between each pair of nodes, then the graph is directed. A cycle is a path that starts and ends on the same node. A path is a series of contiguous nodes connected by directed arcs. Each node in RB is associated with a set of tables of probabilities. The nodes represent the variables of interest, which can be discrete or continuous. A causal network, according to Pearl (2000), is RB with the additional property (Chonawee, Chris K and Lucas, 2006).

A Bayesian network is used basically for inference through the calculation of the Conditional probabilities given the information available so far for each node (beliefs). There are two kinds of algorithms for the inference process: the first generates an accurate solution, and the second produces a solution with high probability rough (Neil, Fenton, and Tailor, 2005). The exact inference algorithms are, for example, poly-tree, click tree, tree, algorithms junction of variable elimination and method of Pear (Cowell, Dawid, Lauritzen, and Spiegelhalter, 1999).

The first step is to define the domain of the problem were specifying the purpose of the Operational risk in the process of the Pro-campo payment. Then identify the variables or important nodes in the domain of the problem. After the interrelation between nodes, the resulting model should be validated by experts in the field. In a case of disagreement between them, we return to one of the above steps to reach a consensus. The last three steps are to incorporate the view of experts, create plausible scenarios with the network (of networks applications), and to fine-tune estimates in time

The main problems faced by an administrator of risk employing are how to implement a Bayesian network, how to model its structure, how to quantify it, how to use subjective data (of experts) or objectives? What instruments should be used for best results, how to validate the model? The answers to these questions can be addressed in the application of the Bayesian model.

The main objective of the application is to prepare a guide to implement RB to measure operational risk in the process of banking. In addition, it intends to generate a consistent measure of capital economic need to deal with losses from risk events

Scope of Application

The case study focuses on the analysis of operational risk events. These arose during the dispersion of resources for the payment of support from banks contracted for this service. Once identified, risk factors associated with each phase of the process, nodes that are defined they were part of the Bayesian network and are random variables which can be discrete or continuous, which have associated probability distributions. In the case that has historical data related to the nodes (variables random) a distribution function; fits them otherwise, it is used to determine the probability of occurrence or any known probability function parameter. The available data are bi-weekly and cover the period from 2008 to 2011; the maximum loss is calculated, expected biweekly derived from faults in Pro-campo payment processing by electronic transfer.

The selected nodes are connected with arcs directed (with arrows) to form a structure that demonstrates the dependence or causal relationship between these as shown in figure 1. It is divided into two networks: one to model the frequency and the other to the severity. This facilitates its analysis, because once the results separately added by the method of Monte Carlo simulation for the loss expected.

The entire network of the frequency is generated from the analysis of risk factors related to banking. These factors are presented during the process of dispersion of resources (electronic transfer accounts of beneficiaries) that are operated through the banking sector. The risks were classified in the following way:

The network of severity is made up of four nodes. The tagged node as the severity of the loss is the additional cost which is incurred by not making the successful transfers, which obliges to pay via checking; the other nodes are considered informative variables.

They were used to quantify objective data as subjective. The proper functioning of the banking institutions depends on the performance of their processes; the maturity of these is associated with the quality management systems in the level process and product. With respect to the odds of the node labeled the performance efficiency are conditioned to the odds of the performance of process nodes and human resource. There is a conditional probability of 20% — since the human resource failure does not have sufficient budgetary resources and that process canceled the account. Conditional probabilities read similarly. With respect to the node labeled systems, are errors generated by the Bank information systems that have the following probability distribution which was calculated with qualitative information provided by the experts. Finally, the target node failure frequency is a function of negative binomial distribution with probability of success p = 0.51 and hits limit equal to 1,000; This assumption is consistent with the financial practice and studies of the operational risk showing breakdowns usually follow a negative binomial or Poisson distribution. To estimate the value of the for-meters resorted to experts and complemented with the analysis of other nodes in the network.

This section discusses each node in the network of severity. In the case of nodes, the corresponding probability distribution is adjusted with available historical information and the required chances are calculated. When there is insufficient data, it gets information from the experts or external.

The Kolmogorov-Smirnov test was performed to determine the goodness of fit with the following values: D = 0.0811, p-value = 0.77. In other words, it is accepted the null hypothesis that the sample comes from a Lognormal. Therefore, it calculates the table of probability for this same severity than the network node. From the analysis, there is a probability of 86% of which are lost up to 500 pesos for failures in systems, 9% chance to miss, between 500 and 1 000 pesos, 2.5% of loss is between 1 000 and 1 500 pesos and 2.5% probability that the loss is greater than 1 500 pesos in a period of 15 days. It then analyzes the human error node, which has shown more losses caused by human error. Finally, the target node loss severity represents the losses associated with node failures in systems, human error, and events in processes. A function was used for the calculation of conditional probability table of Lognormal distribution with parameters mean log (x) = 8.13 and deviation standard. Then odds are generated subsequent to which Bayesian inference techniques are used.

Once analyzed each of the nodes (continuous or discrete random variables) networks both frequency and severity and assigned the corresponding probability distribution functions, are generated chances afterward. These inference techniques are used for Bayesian Networks, in particular, called the junction tree algorithm.

The efficiency of the performance node results shows that there is a likelihood of 24% that has an excellent performance. In addition, a probability of 12% of under-performing problems and a 64% chance that the performance is poor. The calculated odds are conditioned by the performance of the processes. As for the node performance of processes, the likelihood that there are accounts canceled 81%, that account is in another currency is 10%, and that the account does not exist is 9%; this conditioned that there were failures in the human resource.

Finally, the distribution of probabilities of the node of the interest rate of fault shows an 81% chance that occurs less than 1 000 failures, a 19% chance of having between 1 000 and 2 000 faults, and one null probability that occurs more than 2,000 failures; These odds are conditioned to the misunderstandings of systems and the efficiency of processes and reliability of the people.

A binomial is used for the calculation of the probabilities of the node of negative interest with parameters number of successes = 1000 and the probability of success p = 0.51, which is consistent with the empirical evidence of the frequency of events of operational risk have a fit right under this distribution. Losses caused by human errors on average are 673 pesos every two weeks; with regard to the losses by events in the process are 8 554 bi-weekly. It has a bi-weekly loss of 350 pesos. The probability distribution of the node of interest loss severity shows a probability of 59.7% of which the loss is between 0 and 5 000 pesos, a probability of 15.69% of which is between 5 000 and 10 000 pesos, a probability of 16.31% that is between 10 000 and 30 000 pesos, a probability of the 3.93% of which is between 30 000 and 50 000 weights, and a probability of the 4.33% that the loss is greater than 50 000 pesos bi-weekly.

Calculation of the Operational Risk (Op-VaR) Value

Once the Bayesian inference for the probability distributions of the frequency and severity of losses are obtained, these are integrated to generate the distribution of potential losses through a process of simulation Monte Carlo.

To validate the results of the Bayesian model it is estimated with classical models using the distribution of probabilities for the frequency and severity. Subsequently, through Monte Carlo simulation, both distributions are integrated to obtain Position (pesos) percentage loss. Finally, operational risk is calculated with the distribution of losses estimated in classic form and compares the results with those obtained with the Bayesian model.

The change in payment increased transparency and timeliness of the delivery of aid to beneficiaries of the program. However, as demonstrated in the development of this work, there are risk factors associated with the process of dispersion of resources that prevent delivery of these through the deposit to the account of the beneficiaries, which requires making the payment through checks, generating an additional cost for each, not a successful transaction. Risk factors that most impact are related to internal activities for the payment of support, which can be mitigated with improvements to the processes of dispersion and updating of the register, as well as continuing with the policy of banking.

In congruence with the above, this work provides the necessary theoretical elements, as well as a practical guide to identifying measure, quantify and manage the RO in the process of dispersion of resources (electronic transfer) with a Bayesian approach. Also, Bayesian Networks are a viable option for managing operational risk in an environment of uncertainty and limited information or of questionable quality. The capital required in operational risk calculated based on the assumption of the interrelation of risk factors (cause and effect), which is consistent with reality. Bayesian Networks are based on efficient algorithms of propagation of evidence that dynamically update the model with current data. In the case of the object of study of this work, it was possible to build the RB and to calculate the capital required to manage operational risk by combining statistical data, as well as opinions or judgments of experts or external information. The VaR calculated with the Bayesian approach is consistent in the sense of Artzner (1998) but also outlines the complex causal relationships among different risk factors resulting in an operational risk event. In summary, since the reality is much more complex than independent identically distributed events, the Bayesian approach is an alternative to model a complex and dynamic reality.

Recommended Reading

- Basel Committee on Banking Supervision (BCBS) (2006) International Convergence of Capital.

- Measurement and Capital Standards, Bank for International Settlements.

- Chonawee S. Chris K and Lucas H. (2006) “Cause to Effect Operational Risk Quantification and Management”, Risk Management.

- Cowell, R.G., A.P. Dawid S.L. Lauritzen and Spiegelhalter, (1999)”Probabilistic Networks and expert Systems”, Springer-Verlag.

- Madsen, and Kjaerulff (2008) “Bayesian Networks and Influence Diagrams” Springer.

- Neil, M.N., Fenton and M. Tailor. (2005) “Using Bayesian Networks to model expected and unexpected operational losses” Risk Analysis Journal.